
In a recent piece on this site I wrote about the Cheugugi – the rain gauge at the heart of the national rainfall measurement network that Joseon Korea built and operated from 1441. The reason it is worth knowing about is not the instrument itself. It is the discipline behind it. They measured rainfall because governance depended on knowing the answer. The data had a job to do. Every gauge, every reading, every record existed in service of a specific and consequential question: how much rain fell, where, and what does that mean for the harvest?

I have spent 25 years in the IoT and telecoms industry. I have sold the hardware, designed the networks, and watched the sector evolve from basic M2M telemetry into something that now generates data at a scale that is genuinely difficult to comprehend. And the question the Cheugugi keeps raising in my mind is the one the IoT industry has largely avoided asking itself seriously: why are we collecting this, and what is it actually for?

Before we can have a sensible conversation about whether IoT data is useful, it helps to be clear about what it is and how it arrives.

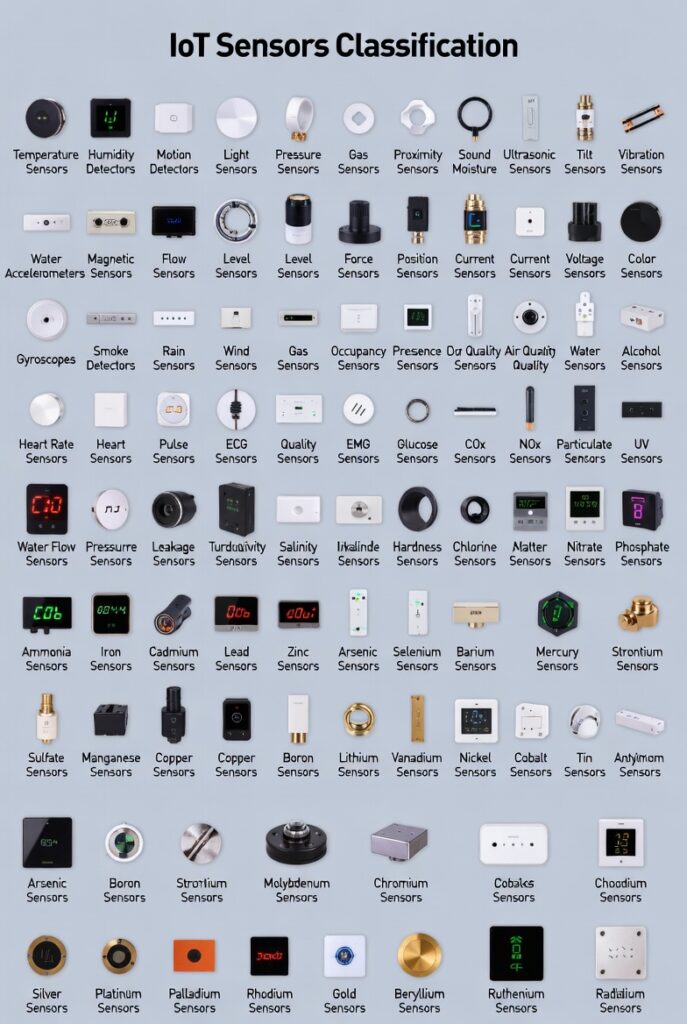

An IoT device is, at its simplest, a thing with a sensor and a communications link. The sensor reads a physical condition – temperature, pressure, motion, voltage, location, humidity, vibration, flow rate, luminosity – and the communications link sends that reading somewhere. Everything else is detail.

The collection method depends on the application. Industrial sensors on a gas pipeline may report continuously via cellular or fixed-line connections. A smart electricity meter may batch its readings and transmit them once every 30 minutes over a mesh radio network. A GPS asset tracker may send a position fix every few seconds when moving and go quiet for hours when stationary. A soil moisture sensor in precision agriculture may take a reading once per hour and hold it until a data aggregator passes by on a scheduled collection cycle.

The data goes somewhere: a cloud platform, an on-premise server, a data warehouse, or increasingly a combination of edge processing and cloud storage. Edge processing means the device or a nearby gateway does initial analysis before transmitting – sending an alert rather than a raw stream, which saves bandwidth and reduces latency. Cloud storage means the raw or processed readings end up in a database that can be queried, visualised, or fed into further analysis.
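As a rough illustration of the edge-processing idea, the sketch below has a gateway forward only the readings that cross a threshold instead of the full stream. The function name `filter_alerts`, the reading format, and the 80.0 threshold are assumptions made for this example, not any platform's API.

```python
from typing import Iterable, List, Tuple

Reading = Tuple[int, float]  # (unix timestamp in seconds, sensor value)


def filter_alerts(readings: Iterable[Reading], threshold: float) -> List[Reading]:
    """Return only the readings whose value exceeds the threshold.

    Hypothetical edge-side filter: everything else stays on the device
    and is never transmitted.
    """
    return [(ts, value) for ts, value in readings if value > threshold]


# One hour of per-minute temperature readings with a single spike.
raw_stream = [(1_700_000_000 + 60 * i, 21.5) for i in range(60)]
raw_stream[42] = (raw_stream[42][0], 85.2)

alerts = filter_alerts(raw_stream, threshold=80.0)
print(f"{len(raw_stream)} readings taken, {len(alerts)} transmitted")
```

Sixty readings go in and one comes out. A real gateway would probably also send periodic summaries so that silence is not mistaken for health, but the bandwidth saving comes from the same basic move.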

What gets stored varies enormously. Some deployments store everything indefinitely. Some store summaries and discard the underlying readings after a short window. Some operators honestly have no clear retention policy at all – the data accumulates because nobody has made a decision about what to keep and why.
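A summarise-then-discard policy of the kind described above could look something like the following. The seven-day raw window, the `apply_retention` name, and the daily mean/min/max summary are illustrative assumptions, not a recommended policy.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone
from statistics import mean

RAW_WINDOW = timedelta(days=7)  # assumed window for keeping raw readings


def apply_retention(readings, now):
    """Split readings into raw rows to keep and daily summaries of the rest.

    readings: list of (datetime, float) tuples.
    Returns (kept_raw, summaries), where summaries maps a date to a dict
    with the mean, min and max of the readings discarded for that day.
    """
    cutoff = now - RAW_WINDOW
    kept_raw = [(ts, v) for ts, v in readings if ts >= cutoff]

    expired = defaultdict(list)
    for ts, v in readings:
        if ts < cutoff:
            expired[ts.date()].append(v)

    summaries = {
        day: {"mean": mean(vals), "min": min(vals), "max": max(vals)}
        for day, vals in expired.items()
    }
    return kept_raw, summaries


now = datetime(2025, 6, 30, tzinfo=timezone.utc)
readings = [
    (now - timedelta(days=10, hours=1), 10.0),
    (now - timedelta(days=10, hours=2), 14.0),
    (now - timedelta(days=1), 12.0),
]
kept, daily = apply_retention(readings, now)
print(len(kept), "raw reading kept;", len(daily), "day summarised")
```

The useful part is not the code but the fact that someone had to choose the window. A written-down number, even an arbitrary one, is a decision; unbounded accumulation is the absence of one.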

This last point matters more than it sounds.

The global IoT estate is now measured in billions of connected devices. Various estimates place the number somewhere between 15 and 18 billion active endpoints as of the mid-2020s, with projections running to 30 billion or more by the end of the decade. Each of those devices is, in principle, generating data. Some are generating a lot of it.

To put that in perspective: a single cellular router deployed in an industrial environment, logging signal quality metrics, connected device counts, data throughput, temperature, and GPS position every 60 seconds, takes 525,600 samples a year. Counted as five values per sample, that is roughly 2.6 million data points per year. Multiply that across a fleet of a thousand routers and you have 2.6 billion data points annually from one deployment, measuring one application, for one operator.
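The exact total depends on how many individual values each log entry is counted as carrying. Here is a quick back-of-envelope check, assuming one sample per minute and (my assumption) five values per sample, one for each metric listed:

```python
MINUTES_PER_YEAR = 60 * 24 * 365  # 525,600 samples at one per minute

# Assumption: each sample carries one value per listed metric
# (signal quality, device count, throughput, temperature, GPS position).
VALUES_PER_SAMPLE = 5
FLEET_SIZE = 1_000

per_router = MINUTES_PER_YEAR * VALUES_PER_SAMPLE
per_fleet = per_router * FLEET_SIZE

print(f"per router: {per_router:,}")  # per router: 2,628,000
print(f"per fleet:  {per_fleet:,}")   # per fleet:  2,628,000,000
```

Counting signal quality as several separate values, or GPS position as latitude and longitude, pushes the figure higher still. The order of magnitude is the point.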

Now think about the aggregate. Smart cities with thousands of sensors. Vehicle fleets with continuous telematics. Energy grids with sub-second metering at millions of points. Consumer wearables worn by hundreds of millions of people, logging heart rate, sleep, steps, and location continuously.

The volume of IoT data being generated globally is not just large. It is, in practical terms, unmeasurable in any meaningful aggregate sense. And a significant fraction of it is never looked at by a human being, never used in a decision, never referenced in any output, and never deleted. It simply accumulates in storage systems that keep getting bigger.

That is not data. That is digital landfill.

There are applications where IoT data collection is not just justifiable but important. These tend to share a common characteristic: the data has a specific decision attached to it, and the decision would be worse without the data. That is the Cheugugi test. Does the data have a job?

Predictive maintenance in critical infrastructure. Vibration sensors on industrial rotating equipment – turbines, pumps, compressors – can detect the early signatures of bearing failure weeks before catastrophic breakdown. A pump failing unexpectedly in a water treatment facility is not an inconvenience. It is a service disruption affecting a population. The maintenance data does not just save the operator money. It keeps the infrastructure working. This is IoT data with clear, direct, measurable value.

Energy grid management. Smart meters and grid sensors enable demand response: the ability to balance supply and consumption in near real-time rather than running excess generation capacity as a buffer. As renewable generation grows and the grid becomes less predictable, this real-time visibility is genuinely important. The data enables decisions that would otherwise require much cruder and more wasteful approaches.

Agricultural water management. Soil moisture sensors combined with weather data can reduce irrigation water use significantly while maintaining or improving yields. In regions where water scarcity is a real constraint, this is not a marginal efficiency gain. It matters. The sensor is doing the job the Joseon magistrate’s gauge did: measuring the actual condition of the ground so that a decision can be made on the basis of what is real rather than what is assumed.

Cold chain monitoring. Temperature and humidity logging throughout the pharmaceutical and food supply chain ensures that products requiring controlled conditions have actually experienced those conditions from manufacture to delivery. A vaccine that has been through a temperature excursion is not just a waste of money. It is a clinical risk. The sensor data provides verifiable proof of chain integrity.

Structural health monitoring. Accelerometers and strain gauges on bridges, dams, and buildings provide early warning of structural change. A bridge that is developing a problem does not announce it visually until very late in the deterioration process. The sensor record shows the trajectory long before the failure.

These examples share the same property. There is a question. The sensor answers it. The answer changes a decision. The changed decision produces a better outcome than the unanswered question would have.

The worst IoT deployments are not the ones that fail technically. The worst ones are the ones that succeed technically and accomplish nothing of value, while creating costs, risks, and a false sense of capability.

Consumer surveillance dressed as convenience. A refrigerator that monitors its contents and sends the data to a manufacturer’s cloud platform is not solving a problem that existed. The person buying groceries knew what was in their fridge. What has actually happened is that the manufacturer has acquired a data stream about household consumption patterns that can be sold to third parties, used for targeted advertising, or retained indefinitely without the user having any practical understanding of what has been collected. The “smart” feature is the commercial value proposition, not the consumer value proposition. These are not the same thing.

Smart city sensor networks without governance. Many smart city deployments have installed sensor infrastructure – cameras, environmental monitors, pedestrian counters, noise sensors – without clear data governance frameworks, retention policies, or public accountability for how the data is used. The technology exists. The data flows. The question of what it is for and who controls it is often answered after the fact, if at all. Sensors in public spaces collecting data about people’s movements and behaviour are not neutral infrastructure. They are surveillance infrastructure, and treating them as equivalent to a rain gauge is intellectually dishonest.

Industrial IoT deployments that generate dashboards nobody reads. A significant fraction of industrial IoT implementations produce visualisations that look impressive in procurement presentations and are checked approximately never in operation. The alerts fire. Nobody responds. The trends accumulate. No decision is ever changed by the data because no workflow was ever built around it. The data collection succeeded. The data use was never designed. This is common, it is expensive, and the industry does not talk about it enough.

Consumer health wearables collecting data with no clinical validation. A device that measures something – heart rate variability, blood oxygen, sleep stages, stress indicators – and presents that measurement as health insight is not the same as a clinically validated medical instrument. Many consumer wearables collect data that sounds meaningful, generates anxiety in users, produces false positives and negatives, and is not validated against any clinical outcome. The data is plentiful. The value is questionable. The potential for harm, particularly in users who respond to readings by altering behaviour or seeking unnecessary medical intervention, is real.

Does the IoT industry collect too much data? Almost certainly yes, by any reasonable definition of “too much” that includes the idea that collected data should have a purpose.

The problem is not technical. Storage is cheap. Transmission is cheap. Sensors are cheap. The marginal cost of collecting one more data point approaches zero in most deployments. When the cost of collection is effectively zero, the discipline to ask whether you should collect something atrophies. You collect it because you can. You store it because you might need it. The question of whether it serves any purpose gets deferred indefinitely.

This is the opposite of how the Joseon network worked. Every gauge cost something to manufacture, deploy, and maintain. Every reading required an official’s time. The archive required storage and administration. The system was built around a clear purpose, and the cost of measurement enforced a discipline about what was worth measuring.

When measurement costs nothing, that discipline disappears. And the result is not better data. It is more data of lower utility, surrounding and often obscuring the smaller amount of data that actually matters.

There is also a risk dimension that the industry underweights. Data that is collected and stored is data that can be breached, subpoenaed, misused, or sold. A fleet of agricultural sensors recording field conditions carries minimal privacy risk. A network of consumer devices recording location, behaviour, and biometrics continuously carries substantial risk. The question is not just whether the data is useful. It is whether the risk of holding it is proportionate to the value it provides.

What the industry gets right: the genuinely useful applications are genuinely useful. Predictive maintenance, grid management, precision agriculture, cold chain, structural monitoring – these are real problems being measurably improved by sensor data. The technology works. The connectivity infrastructure supporting it has improved dramatically over the past decade. The cost and scale economics make it possible to deploy sensor networks that would have been prohibitively expensive a generation ago.

What it gets wrong: the industry has a persistent tendency to conflate data collection with insight, and insight with value. The pipeline from sensor to dashboard to decision is presented as a natural progression, but in practice the middle and final stages are where most deployments break down. Collecting data is easy. Asking what decision it should change, building the workflow that allows it to change that decision, and measuring whether the outcome improved – that is hard, and it is what most IoT project budgets do not cover.

The industry also has a marketing problem that bleeds into a credibility problem. When every kettle with a WiFi chip is presented as “smart infrastructure” and every data logger is presented as an “AI-enabled insight platform,” the language loses meaning. The serious applications – the ones where sensor data is doing important work – get buried under a pile of consumer gadgetry and enterprise slideware. That makes it harder to have honest conversations about where the technology actually delivers and where it does not.

What would it look like to apply Joseon Korea’s discipline to modern IoT data collection?

It would look like this: before deploying sensors, identify the specific decision the data will inform. Define what a good outcome looks like and how the data will contribute to it. Collect the data required for that decision and not substantially more. Retain it for as long as it remains useful to that decision and not substantially longer. Evaluate whether the data is actually changing decisions and improving outcomes, and stop collecting it if the answer is consistently no.
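One way to make this standard operational is to refuse to register a data stream until its purpose is written down, and to review it on a schedule. The sketch below is hypothetical: the `DataStream` fields and the `should_keep_collecting` review rule are my own assumptions about how such a check might be encoded, not an established framework.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DataStream:
    name: str
    decision_informed: str         # the specific decision this data feeds
    retention_days: int            # how long readings stay useful
    decisions_changed: List[bool]  # per review period: did the data change a decision?

    def __post_init__(self):
        # Asking "why" before "how": no stated decision, no stream.
        if not self.decision_informed.strip():
            raise ValueError(f"{self.name}: no decision attached to this data")
        if self.retention_days <= 0:
            raise ValueError(f"{self.name}: retention period must be positive")


def should_keep_collecting(stream: DataStream, review_periods: int = 4) -> bool:
    """Keep collecting unless the last N reviews all found no decision changed.

    The default of four review periods is an arbitrary illustrative choice.
    """
    recent = stream.decisions_changed[-review_periods:]
    if len(recent) < review_periods:
        return True  # not enough evidence yet to stop
    return any(recent)


pump = DataStream("pump-vibration", "schedule bearing replacement", 365,
                  [False, True, False, False])
idle = DataStream("lobby-footfall", "adjust cleaning rota", 90,
                  [False, False, False, False])
print(should_keep_collecting(pump), should_keep_collecting(idle))  # True False
```

Nothing here is clever. The value is in making the "why" a required field instead of an afterthought, so that a stream with no stated decision cannot be created in the first place.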

That is not a technically difficult standard. It is a governance standard. It requires asking why before asking how, and treating the answer to why as a constraint rather than a suggestion.

The Cheugugi was not impressive because Korea had good gauges. It was impressive because Korea had a reason to measure, a method for measuring consistently, and an institutional commitment to using the result. The modern IoT industry has the first two. The third – the genuine commitment to using data to answer real questions rather than to generate the appearance of analytical sophistication – is the one that most deployments still need to earn.

The rain gauge does not care what answer you want. That is why it is useful.

Nick Appleby has 25+ years in the telecoms and IoT industry. The Cheugugi article referenced in the opening is available elsewhere on this site.