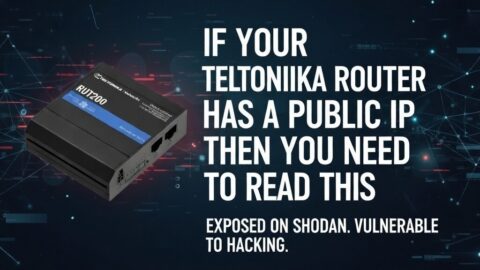

In security, zero trust is a framework. In life, it is a warning. The principles are the same. The stakes are higher.

The term “zero trust” was popularised in 2010 by a Forrester analyst, John Kindervag. He was describing a problem that had plagued enterprise networking for decades: the assumption that anything inside the network perimeter could be trusted. That assumption, he argued, was the single most dangerous flaw in how organisations thought about security.

He was right. And the lesson he was teaching was not really about networking at all.

Before we get to zero trust as a technical model, it is worth asking the question that most security professionals skip over: what is trust, actually?

Trust is a calculated risk. It is the decision to make yourself vulnerable to another party – a person, a device, a system, an organisation – based on your expectation of how they will behave. It is not blind faith. It is not certainty. It is a working assumption that holds until the evidence changes.

Trust is earned incrementally and lost suddenly. That asymmetry is important. It takes months or years to build a reputation for reliability, and it can be destroyed in a single moment of misalignment between what someone said they would do and what they did, or did not do.

“Trust takes years to build, seconds to break, and forever to repair.” The origin of that line is disputed. The truth of it is not.

In both networks and human relationships, the question is never whether to trust or not to trust. The question is how to calibrate that trust appropriately – and what to do when it breaks.

The traditional approach to network security was built on the concept of a perimeter. You drew a line around your organisation – physically, logically, digitally – and you assumed that anything inside that line was safe. Users inside the office, devices on the corporate network, systems behind the firewall: all trusted by default.

The problem is that this model was always a fiction. It assumed a clean boundary between inside and outside that the modern world simply does not support. Remote workers, cloud services, IoT devices, third-party integrations, supply chain access – all of these punch holes through the perimeter long before any attacker does.

In the old model, once you were in, you were in. Lateral movement inside the network was trivially easy. A single compromised device could reach everything. The 2013 Target breach – where attackers entered through an HVAC contractor’s credentials and reached payment systems – is the textbook example. The perimeter was never forced; the attackers walked through it with valid credentials. Once they were inside, the interior was wide open.

Zero trust inverts the assumption. Nothing is trusted by default – not users, not devices, not applications, not network traffic – regardless of where it originates. Every access request is authenticated, authorised, and validated in context, every single time.

The core principles of zero trust, as reflected in NIST guidance (SP 800-207) and widely adopted across the industry, are straightforward:

Always authenticate and authorise based on all available data points – identity, location, device health, service or workload, data classification, and anomalies.

Limit user access with just-in-time and just-enough-access principles. Minimise the blast radius of any single compromise.

Design systems as if an attacker is already inside. Minimise lateral movement, segment access, encrypt everything, and monitor continuously.

Trust is not granted once and forgotten. It is re-evaluated continuously based on behaviour, context, and changing conditions.
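To make those principles concrete, here is a minimal sketch of a per-request access decision in Python. It is an illustration under stated assumptions, not a reference implementation: the `AccessRequest` fields, the `ALLOWED_LOCATIONS` table, and the `ANOMALY_THRESHOLD` value are all hypothetical. The point is only that every request is judged on identity, device health, and context, and that a suspicious request is challenged again rather than waved through.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user_authenticated: bool  # identity verified for this request, e.g. via MFA
    device_compliant: bool    # device health check passed
    location: str             # coarse location label (hypothetical)
    resource: str             # what is being requested
    anomaly_score: float      # 0.0 (normal) to 1.0 (highly unusual), assumed scale


# Hypothetical policy data: the locations from which each resource may be reached.
ALLOWED_LOCATIONS = {"payroll": {"GB"}, "wiki": {"GB", "IE", "DE"}}
ANOMALY_THRESHOLD = 0.7  # assumed cut-off for demanding re-verification


def decide(request: AccessRequest) -> str:
    """Return 'allow', 'deny' or 'step_up' for a single request."""
    # Nothing is trusted by default: identity and device health come first.
    if not request.user_authenticated or not request.device_compliant:
        return "deny"
    # Resources with no policy entry are unreachable (deny by default).
    if request.location not in ALLOWED_LOCATIONS.get(request.resource, set()):
        return "deny"
    # Unusual behaviour triggers re-verification, not passive acceptance.
    if request.anomaly_score >= ANOMALY_THRESHOLD:
        return "step_up"
    return "allow"
```

The function is called on every request, with no memory of earlier approvals. That is the design choice that matters: a decision made five minutes ago carries no weight now.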

Zero trust is not a product you can buy. It is an architecture, a philosophy, and an operational discipline. And in the IoT world, where the attack surface has exploded to include thousands of edge devices across distributed infrastructure, it has moved from best practice to necessity.

IoT introduces a category of devices that traditional security models were never designed to handle. A cellular router on a remote energy site, a sensor array in a smart building, a fleet of connected vehicles – these are not managed endpoints with installed agents and regular patch cycles. They are constrained devices, often with minimal processing power, long deployment lifespans, and limited visibility.

In an IoT context, zero trust manifests as a set of architectural choices:

Every device must have a verifiable identity before it is permitted to communicate with any other system. Certificate-based authentication, SIM-level identity, hardware security modules – the mechanism matters less than the principle: no device is trusted simply because it is present on the network. This is where technologies like eSIM and eUICC add genuine security value, binding device identity to the network profile in ways that are harder to spoof or transfer than a physical SIM.

Rather than allowing devices to communicate freely once connected, zero trust in IoT means isolating workloads and device groups. A temperature sensor in a manufacturing plant has no legitimate reason to reach the HR system. Segmenting at the network level ensures that a compromised sensor cannot become a pivot point into broader infrastructure.
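The segmentation idea can be sketched as a deny-by-default allowlist. This is a simplified model in Python, not how any particular firewall or SDN platform expresses policy; the segment names, device IDs, and destinations are invented for illustration.

```python
# Hypothetical segments and the only destinations each one may reach.
SEGMENT_ALLOWLIST = {
    "plant-sensors": {"telemetry-gateway"},
    "office-laptops": {"hr-system", "email"},
}

# Hypothetical assignment of devices to segments.
DEVICE_SEGMENT = {
    "temp-sensor-01": "plant-sensors",
    "laptop-17": "office-laptops",
}


def is_flow_allowed(device_id: str, destination: str) -> bool:
    """Allow a flow only if the device's segment explicitly lists the destination."""
    segment = DEVICE_SEGMENT.get(device_id)
    if segment is None:
        return False  # unknown devices are not trusted for being present
    return destination in SEGMENT_ALLOWLIST.get(segment, set())
```

Under these assumptions, `is_flow_allowed("temp-sensor-01", "hr-system")` is `False`: the sensor has a path to its telemetry gateway and nowhere else, so compromising it does not open a route to HR.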

A device that behaves differently from its baseline – unexpected traffic patterns, communication to unknown endpoints, unusual data volumes – should trigger re-validation, not passive acceptance. Zero trust treats a change in behaviour as a reason to recheck, not to assume.
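A rough sketch of that baseline check, in Python. The `Baseline` fields and the three-times volume tolerance are assumptions chosen for the example; a real deployment would derive its baseline from observed traffic and would likely use richer signals.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class Baseline:
    known_endpoints: FrozenSet[str]  # endpoints the device normally talks to
    typical_daily_bytes: int         # normal daily data volume
    volume_tolerance: float = 3.0    # assumed multiplier before volume is "unusual"


def deviations(baseline: Baseline, endpoints_seen: Iterable[str], daily_bytes: int) -> List[str]:
    """List the ways observed behaviour departs from the baseline."""
    reasons = []
    unknown = sorted(set(endpoints_seen) - baseline.known_endpoints)
    if unknown:
        reasons.append("unknown endpoints: " + ", ".join(unknown))
    if daily_bytes > baseline.typical_daily_bytes * baseline.volume_tolerance:
        reasons.append("data volume above baseline")
    return reasons


def needs_revalidation(baseline: Baseline, endpoints_seen: Iterable[str], daily_bytes: int) -> bool:
    """Any deviation is a reason to recheck the device, not to assume."""
    return bool(deviations(baseline, endpoints_seen, daily_bytes))
```

Returning the reasons, rather than a bare yes or no, keeps the decision explainable: whoever reviews the alert can see what changed.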

Devices should have access only to what they need to perform their function. A remote monitoring unit does not need access to the full corporate network. It needs a path to its management platform and nothing else. Least privilege at the device level is both a security principle and a practical architecture constraint.

For most organisations, the first practical question when moving toward zero trust is what to do about remote access. For decades, the answer was a VPN – a virtual private network that extended the corporate perimeter to remote users by creating an encrypted tunnel back to the network. The problem is that traditional VPN deployments often grant broad network access once the user is connected. They are inside the perimeter. Everything is reachable. That is exactly the model zero trust is designed to replace.

Zero Trust Network Access (ZTNA) is the architectural answer. Instead of giving a remote user access to the network, ZTNA gives them access only to specific applications or services they are authorised to use – and only after their identity, device health, and context have been verified. The network itself remains invisible to them. They cannot scan it, probe it, or move laterally through it. They get a path to what they need and nothing else.

The difference in practice is significant. With a traditional VPN, a compromised user credential means an attacker has the run of the internal network. With ZTNA, a compromised credential provides access only to the applications that user was permitted to reach – and continuous monitoring may detect anomalous behaviour and revoke even that before significant damage is done.
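The difference in blast radius can be shown with a toy model. The Python below assumes a flat internal network behind the VPN, which is the broad-access deployment described above rather than every VPN configuration, and the service names and entitlements are hypothetical.

```python
from typing import Set

# Hypothetical internal services and per-user entitlements.
INTERNAL_SERVICES = {"email", "wiki", "payroll", "build-server", "scada-console"}
USER_ENTITLEMENTS = {"alice": {"email", "wiki"}}


def reachable_via_vpn(user: str) -> Set[str]:
    """Flat-network assumption: once connected, every service is reachable."""
    return set(INTERNAL_SERVICES)


def reachable_via_ztna(user: str) -> Set[str]:
    """Only the applications this user is explicitly entitled to."""
    return set(USER_ENTITLEMENTS.get(user, set()))


def blast_radius(user: str) -> dict:
    """Count what a stolen credential for this user could reach under each model."""
    return {
        "vpn": len(reachable_via_vpn(user)),
        "ztna": len(reachable_via_ztna(user)),
    }
```

In this model a stolen credential for `alice` reaches five services over the VPN and two through ZTNA. A user with no entitlements reaches nothing at all through ZTNA, while the VPN figure does not change.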

ZTNA is typically delivered in one of two ways. Agent-based ZTNA installs a lightweight client on the user’s device that handles authentication and policy enforcement locally before establishing a connection. Agentless ZTNA operates through a browser-based proxy, requiring no software installation – useful for contractor access or unmanaged devices where installing an agent is not practical.

Zero trust is not something you switch on. It is a direction of travel, and most organisations implement it in phases. The broad sequence looks like this:

The first step is deploying a robust identity and access management (IAM) system with multi-factor authentication (MFA) across all users and services. If you do not know with confidence who is requesting access at any given moment, everything else is built on sand. This typically means integrating an identity provider or directory service – Microsoft Active Directory, Microsoft Entra ID (formerly Azure AD), Okta, or equivalent – with MFA enforced for all authentication events, not just logins from outside the office.

You cannot apply zero trust policies to devices you cannot see. A device inventory – understanding what is on the network, what state it is in, whether it is patched and compliant – is a prerequisite for making any access decision based on device health. Endpoint management and security platforms like Microsoft Intune, Jamf, or CrowdStrike Falcon handle this for traditional endpoints. For IoT devices, specialist platforms are needed; those are covered in the companion piece on vendors and edge hardware.

Micro-segmentation divides the network into isolated zones, ensuring that even if one segment is compromised, an attacker cannot move freely into others. This can be implemented at the network layer through VLANs and firewall rules, or at a higher level through software-defined networking (SDN) platforms that apply policy regardless of the underlying physical topology. For IoT deployments, dedicated IoT network segments – isolated from corporate infrastructure by default – are a basic hygiene requirement.

Audit what access each user, service account, and device actually needs. In most organisations that have grown organically, this audit reveals significant over-provisioning – users with admin rights they were given once for a specific task and never had revoked, service accounts with broad permissions inherited from a configuration set up years ago. Ratcheting permissions down to the minimum required is tedious but foundational.
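At its simplest, that audit is a set difference between what has been granted and what has actually been used. The sketch below is in Python and assumes you can already export both as plain mappings; the principals and permission names are invented, and a real audit would pull them from your IAM system and access logs.

```python
from typing import Dict, Set


def unused_permissions(
    granted: Dict[str, Set[str]],
    used: Dict[str, Set[str]],
) -> Dict[str, Set[str]]:
    """For each principal, return permissions granted but never observed in use."""
    report = {}
    for principal, permissions in granted.items():
        excess = permissions - used.get(principal, set())
        if excess:
            report[principal] = excess
    return report


# Hypothetical export: what was granted, and what the logs show being used.
granted = {
    "jsmith": {"read:reports", "admin:servers"},
    "svc-backup": {"read:files", "write:files", "delete:files"},
    "mjones": {"read:reports"},
}
used = {
    "jsmith": {"read:reports"},
    "svc-backup": {"read:files"},
    "mjones": {"read:reports"},
}

candidates_for_removal = unused_permissions(granted, used)
```

Treat the output as a list of candidates, not a verdict. A permission that went unused during the observation window may still be needed for a quarterly task or an emergency, so the result is a prompt for a conversation before anything is revoked.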

Zero trust without visibility is incomplete. A Security Information and Event Management (SIEM) system aggregates logs from across the environment and surfaces anomalies – unusual login times, access requests from unexpected locations, data volumes that do not match normal behaviour. The goal is not just to detect breaches after they happen, but to catch the indicators that precede them.

Zero trust is not a destination. It is a direction. The question is not whether your organisation is fully zero trust – almost none are. The question is whether you are moving toward it, and whether you know where your current exposure sits.

If you want the practical side – the platforms, the hardware manufacturers, the cellular network controls, and how a zero trust architecture actually gets assembled at the edge – that is covered in a separate piece, “Zero Trust Networking: A Practical Guide to Vendors, Platforms, and Edge Hardware”. This one is about the logic underneath it all.

What makes zero trust genuinely interesting is not the technology. It is how precisely the framework maps onto the way trust actually works between people.

Consider the parallel. In professional environments, we often extend trust by default to people who have been granted access – to a team, a system, an organisation. The assumption is that because they are here, because they have been let in, they can be trusted. Their presence inside the perimeter is treated as sufficient validation.

That assumption fails for exactly the same reasons it fails in networks. Access is not the same as alignment. Being inside the organisation does not mean a person’s interests, values, or intentions are consistently aligned with it. And the failure mode – when that misalignment surfaces – is always worse when it was never anticipated, because there were no controls in place to limit the damage.

Zero trust as a human principle does not mean paranoia. It does not mean treating colleagues as suspects or refusing to rely on anyone. It means something more precise: that trust should be proportionate, contextual, and continuously re-evaluated against evidence rather than granted wholesale and left unchecked.

It means the access you grant should match what is actually needed. Not more. Not on the assumption that broader access signals confidence or respect.

It means that a change in behaviour – inconsistency between what someone says and what they do, a shift in how they communicate, a pattern that does not match the baseline – is worth paying attention to. Not as a reason to assume the worst, but as a reason to re-verify.

And it means building systems – in teams, in organisations, in relationships – where a single point of failure cannot take everything down. Where the integrity of the whole does not depend on the perfect behaviour of any one node.

I have spent a long time thinking about trust. A fair amount of that education arrived early and uninvited. The instinct not to trust – to verify rather than assume, to watch behaviour rather than take presence at face value – was not something I developed professionally. It was installed much earlier, in childhood, by the actions of other people. Not by my own decisions. By theirs.

That kind of early education leaves a mark. It shapes how you read people, how much access you extend, how long it takes you to believe that what someone says and what they will do are actually the same thing. In some ways it is useful. In others it is a weight. But it does give you a particular clarity about what trust actually costs – because you learned the price before you learned the word.

Professionally, the infrastructure I work with every day depends on trust being properly calibrated. Devices authenticating to networks, systems verifying identities, architectures designed to assume that any component could fail or be compromised. The engineering is rigorous because the cost of getting it wrong is measurable and immediate.

Personally, broken trust is not a clean event. It propagates. It does not respond to a patch or a configuration change. Repairing it is slow, requires honesty from both sides, and only works if the conditions that broke it are actually changed – not just acknowledged and left in place.

The zero trust model, in its most human application, is this: do not assume that because someone has always been trustworthy, they always will be. Do not assume that because someone has access, they share your values. Verify. Pay attention to behaviour, not just credentials. And when something changes – when the baseline shifts – address it directly rather than hoping it resolves itself.

Trust is not a fixed asset. It is a live system that requires maintenance, monitoring, and honest re-evaluation. In networks and in life, the organisations and relationships that treat it as such are the ones that survive a breach intact. Those that do not tend to discover the weakness only after the damage is already done.

I am still working on trust. I expect I always will be.

This article by Nick Appleby examines zero trust – the security architecture built on the principle of never trusting, always verifying – as both a technical framework and a model for how trust functions in human systems. It covers the failure of the perimeter security model, the core principles of zero trust as defined by NIST, the application of zero trust to IoT and edge deployments, and the parallel between network access control and the way trust operates in professional and personal relationships. Key arguments include the distinction between access and alignment, the asymmetric nature of trust (earned incrementally, lost suddenly), and the principle that trust is a live system requiring continuous re-evaluation rather than a fixed assumption. A companion technical article covers zero trust vendors, platforms, and edge hardware in depth.